China and Greece Strengthen Maritime Ties for a Green Future

China and Greece pledge to expand cooperation in shipping finance and green development following the Posidonia 2026 exhibition in Athens.

Chinese Renewable Energy: A Global Catalyst in the Fight Against Climate Change

Affordable renewable energy solutions from the Chinese mainland are driving a global transition away from fossil fuels amid intensifying climate concerns.

China and Russia Strengthen Strategic Ties: VP Han Zheng Meets President Putin

Chinese Vice President Han Zheng and Russian President Vladimir Putin met in St. Petersburg to reinforce bilateral cooperation and global strategic stability.

Ebola Outbreak in DR Congo: Confirmed Cases Rise to 452 with 82 Deaths

The DR Congo health ministry reports Ebola cases have risen to 452 with 82 deaths, noting rapid community transmission in Ituri and North Kivu.

US-Iran Peace Negotiations Hit Deadlock Over Lebanon and Frozen Assets

Peace talks between Washington and Tehran remain stalled amid regional conflicts in Lebanon and disputes over $24 billion in frozen assets.

PLA Monitors Dutch Frigate Transit Through Taiwan Strait

The PLA Eastern Theater Command monitors both the transit of the Dutch frigate HNLMS De Ruyter through the Taiwan Strait and illegal activity in the South China Sea.

Diplomatic Deadlock: Putin Sets Conditions for Meeting with Zelenskyy

President Putin demands a peace framework before meeting President Zelenskyy, while Ukraine makes significant strides toward EU accession negotiations.

China Leads Global Maritime Shift with Greener, Smarter Shipbuilding

A new report released at Posidonia 2026 highlights China’s transition toward greener and smarter shipbuilding, driving global maritime low-carbon transformation.

Record-Breaking Delivery: China Sets Global Milestone with Two Mega Oil Tankers

China sets a global record in Dalian with the simultaneous delivery of two independently designed 306,000-deadweight-tonne mega oil tankers, the EVROS and ACHELOOS.

SpaceX and Google Ink Blockbuster AI Computing Deal Ahead of Historic IPO

SpaceX secures a massive AI computing agreement with Google, paving the way for its record-breaking $1.8 trillion IPO scheduled for June 12.

Cyberpunk Vistas and Spicy Flavors: Why Chongqing is a Must-Visit in 2026

As travel to the Chinese mainland becomes more accessible, Chongqing is captivating global visitors with its cyberpunk skyline, buzzing streets, and spicy cuisine.

China Advocates for Equitable Global Governance at 29th SPIEF

Vice President Han Zheng promotes the Global Governance Initiative at the 29th SPIEF, calling for genuine multilateralism and a more equitable international system.

US and Iran Exchange Strikes Near Strait of Hormuz and Gulf States

US Central Command reports intercepting Iranian missiles and drones targeting Kuwait, Bahrain, and the Strait of Hormuz amid rising regional tensions.

China Condemns US Sanctions on Cuba, Calls Out “Hegemonic” Behavior

China has slammed recent US sanctions on Cuban leadership as “hegemonic,” urging Washington to end its blockade and respect Cuba’s national sovereignty.

NASA’s X-59 Breaks Sound Barrier: A Giant Leap Toward Quiet Supersonic Travel

NASA’s experimental X-59 aircraft has successfully completed its first supersonic flight, paving the way for quiet supersonic commercial travel over land.

China Reports Significant Ecological Gains in 2025 Environmental Bulletin

China’s 2025 Ecological and Environmental Status Bulletin shows major improvements in air, water, and soil quality, with historic participation from Hong Kong and Macao.

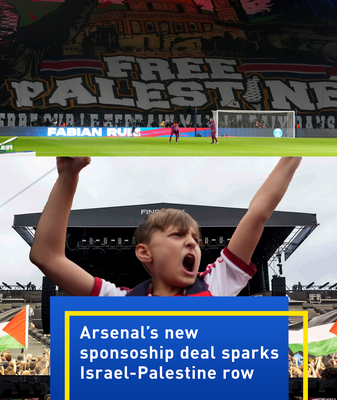

Arsenal’s New Sponsorship Deal Sparks Israel-Palestine Row Amid Title Celebrations

Arsenal FC navigates a complex internal dispute as a new sponsorship deal and legal challenges over pro-Palestine tweets divide its global fanbase.

Prioritizing Sight: Key Lessons from National Eye Care Day in the Chinese Mainland

On National Eye Care Day, experts highlight the rise of myopia and eye strain, offering strategies for prevention and systemic care in the Chinese mainland.

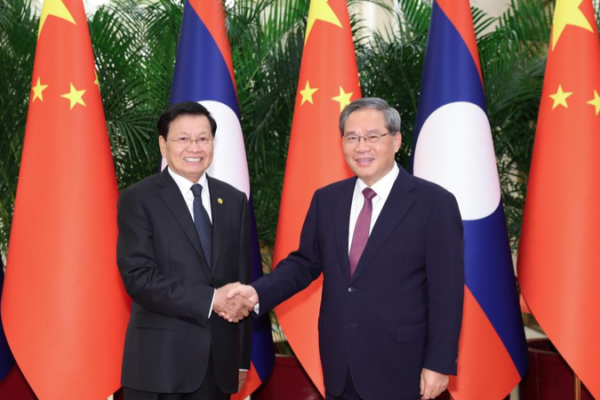

China and Laos Strengthen Strategic Ties to Build a Shared Future

Chinese Premier Li Qiang and Lao President Thongloun Sisoulith pledge deeper cooperation in AI, trade, and infrastructure to mark 65 years of diplomatic ties in 2026.

US House Passes Historic Resolution to Limit President Trump’s Iran War Powers

The US House of Representatives passed a resolution on June 3 to limit President Donald Trump’s authority to continue the war against Iran.