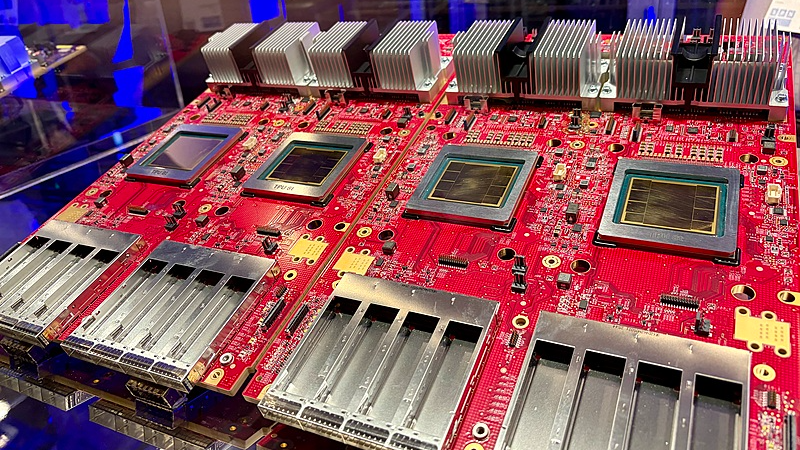

Google Cloud unveiled its eighth-generation Tensor Processing Units (TPUs) at the Cloud Next conference on April 23, 2026, in a move that reshapes its AI infrastructure strategy. The new TPU 8t and TPU 8i chips mark the first time Google has split a TPU generation into separate training and inference architectures, a design aimed at the growing demands of the emerging agentic AI era.

Specialized Chips for Next-Gen AI

The TPU 8t training accelerator scales to clusters of up to 9,600 chips with 2 PB of shared memory, tripling compute performance over earlier TPU models. That jump could shorten development cycles for frontier AI models from months to weeks.
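For a sense of scale, the cluster figures above can be turned into a rough per-chip number. The sketch below is back-of-envelope arithmetic only: it assumes decimal units (1 PB = 10^15 bytes) and an even split of the shared pool across chips, neither of which the announcement states, and the function name is made up for illustration.

```python
# Back-of-envelope only: assumes decimal units and an even split of the
# shared memory pool across all chips (assumptions, not announced specs).
PB = 10**15  # bytes in a petabyte (decimal)
GB = 10**9   # bytes in a gigabyte (decimal)


def memory_per_chip_gb(total_bytes: int, chips: int) -> float:
    """Average share of the pooled memory per chip, in GB."""
    return total_bytes / chips / GB


share = memory_per_chip_gb(2 * PB, 9_600)
print(f"About {share:.0f} GB of shared memory per chip")
```

Under those assumptions the pool works out to roughly 208 GB per chip.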

Meanwhile, the TPU 8i inference chip targets latency with 288 GB of HBM and 384 MB of on-chip SRAM, triple its predecessor's capacity. The design keeps entire AI working sets on-chip, which is crucial for the real-time multi-agent systems that power advanced chatbots and autonomous platforms.

Efficiency Meets Scale

Both chips are paired with Google's Axion Arm-based CPUs and fourth-generation liquid cooling. The company claims an 80% price-performance gain for inference workloads and a 2.8x improvement for training tasks, which could roughly double the capacity customers get at the same cost.
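The price-performance figures above are easier to compare once both are expressed as multipliers. The sketch below is illustrative arithmetic, not Google's methodology: it assumes "price-performance" means work done per dollar, and the helper names are hypothetical.

```python
# Illustrative arithmetic; assumes price-performance = work done per dollar.
def capacity_multiplier(gain: float) -> float:
    """Convert a fractional gain (0.80 for 80%) into work per dollar vs. baseline."""
    return 1.0 + gain


def cost_fraction_for_same_work(multiplier: float) -> float:
    """Share of the old bill needed to run the same workload."""
    return 1.0 / multiplier


inference = capacity_multiplier(0.80)  # 80% gain is a 1.8x multiplier
training = 2.8                         # already quoted as a multiplier

for name, m in [("inference", inference), ("training", training)]:
    print(f"{name}: {m:.1f}x capacity at equal cost, "
          f"or {cost_fraction_for_same_work(m):.0%} of the cost for equal work")
```

On that reading, the 80% inference gain is a 1.8x multiplier, so "doubling" is an approximation, while the training figure implies the same work for a little over a third of the previous cost.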

CEO Sundar Pichai also announced that Google plans to invest $175 billion to $185 billion in infrastructure in 2026, underscoring how central these chips are to the company's bid to maintain its cloud computing leadership.

Reference(s):

cgtn.com