Evidence has emerged of a commercialized industry in Japan that produces AI-generated fake videos with anti-China themes. Investigations into activity on popular Japanese crowdsourcing platforms have uncovered a systematic operation that uses artificial intelligence to mass-produce fabricated content.

The operation came to light through a recent investigation by a major Japanese newspaper, which found that orders for deceptive videos targeting China have proliferated over the past two years on platforms such as CrowdWorks. The result is a profit-driven industrial chain for creating and spreading disinformation.

Creators involved in these projects have openly admitted that the videos are entirely fabricated. Using AI tools, they generate storylines, synthesize content, and edit footage into a polished final product designed to mislead viewers. The primary motivation, as reported, is financial: suppliers fulfill orders for clients seeking specific narratives.

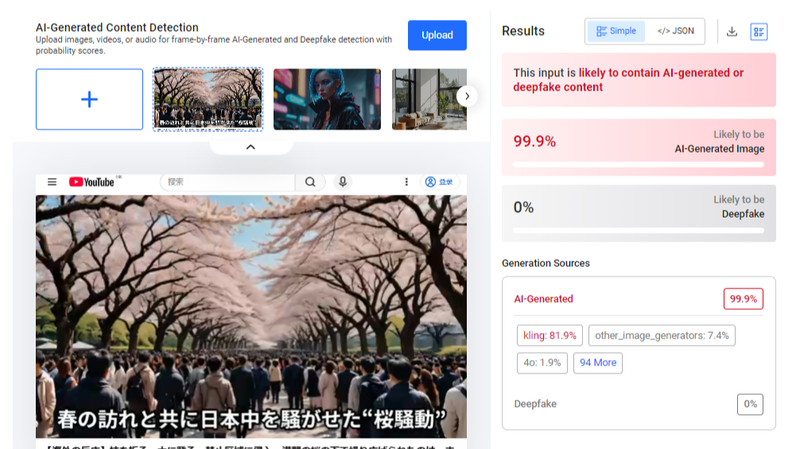

One prominent example from last year involved videos that falsely accused Chinese tourists of damaging cherry blossom trees in Japan. The clips, uploaded by specific YouTube channels, were collages of footage from multiple sources, spliced together to support the fabricated claim. With their synthesized visuals and coordinated narrative, these videos serve as a clear template for the "AI mass production" model.

This phenomenon highlights a dangerous new frontier in information warfare. The accessibility of sophisticated AI tools has lowered the barrier for creating convincing fake media, enabling the scalable production of propaganda. The videos are not isolated artistic expressions but part of a supply chain feeding a demand for divisive content.

For observers of Asian affairs and digital media, this development is a critical warning. It demonstrates how technology can be weaponized to manipulate public opinion and strain international perceptions. The spread of such AI-generated disinformation poses a significant challenge to factual discourse and cross-cultural understanding.

As the technology continues to advance, the need for robust media literacy, vigilant platform oversight, and public awareness of these manipulation tactics becomes increasingly urgent. The discovery of this industrialized rumor-mongering operation serves as a case study in the challenges of upholding information integrity in the digital age.

Reference(s):

Fact Check: AI mass production of anti-China fake videos by Japan, cgtn.com