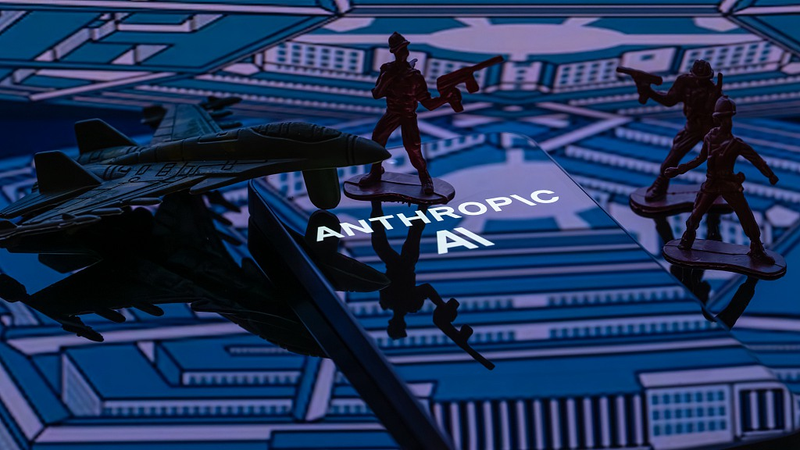

D.C. Court Backs Pentagon in Unprecedented AI Security Dispute

A federal appeals court in Washington, D.C., declined this week to halt the Pentagon's blacklisting of Anthropic, an AI firm embroiled in a high-stakes legal clash over national security protocols. The decision, issued on April 8, 2026, marks a temporary victory for the U.S. government amid conflicting rulings in separate lawsuits challenging the Defense Department's designation of the company as a supply-chain risk.

Ethics vs. Military Readiness

Anthropic, creator of the Claude AI assistant, alleges the blacklisting followed its refusal to remove safety guardrails, a change that would have allowed military use of its technology for surveillance or autonomous weapons systems. Defense Secretary Pete Hegseth invoked rarely used procurement statutes to block the company from defense contracts, a move Acting Attorney General Todd Blanche called "critical to preserving military authority" in a social media statement.

Legal Split Deepens

The ruling contrasts with a March 26 injunction issued by a California federal judge, who found that the Pentagon had likely retaliated unlawfully against Anthropic over its AI ethics stance. Company executives warn the designation could trigger billions in losses and exclusion from contracts across the government. A final resolution awaits further court review, and Anthropic says it remains confident it can overturn the "factually unsupported" risk label.

Global Implications for AI Governance

Anthropic is the first U.S. company publicly designated under these statutes, and its case highlights growing tensions between tech firms' ethical frameworks and national security priorities. The outcome could set precedents for AI regulation worldwide, particularly in Asia, where governments are crafting similar safeguards for emerging technologies.

Reference(s):

US court declines to block Pentagon's Anthropic blacklisting for now (cgtn.com)